Plural

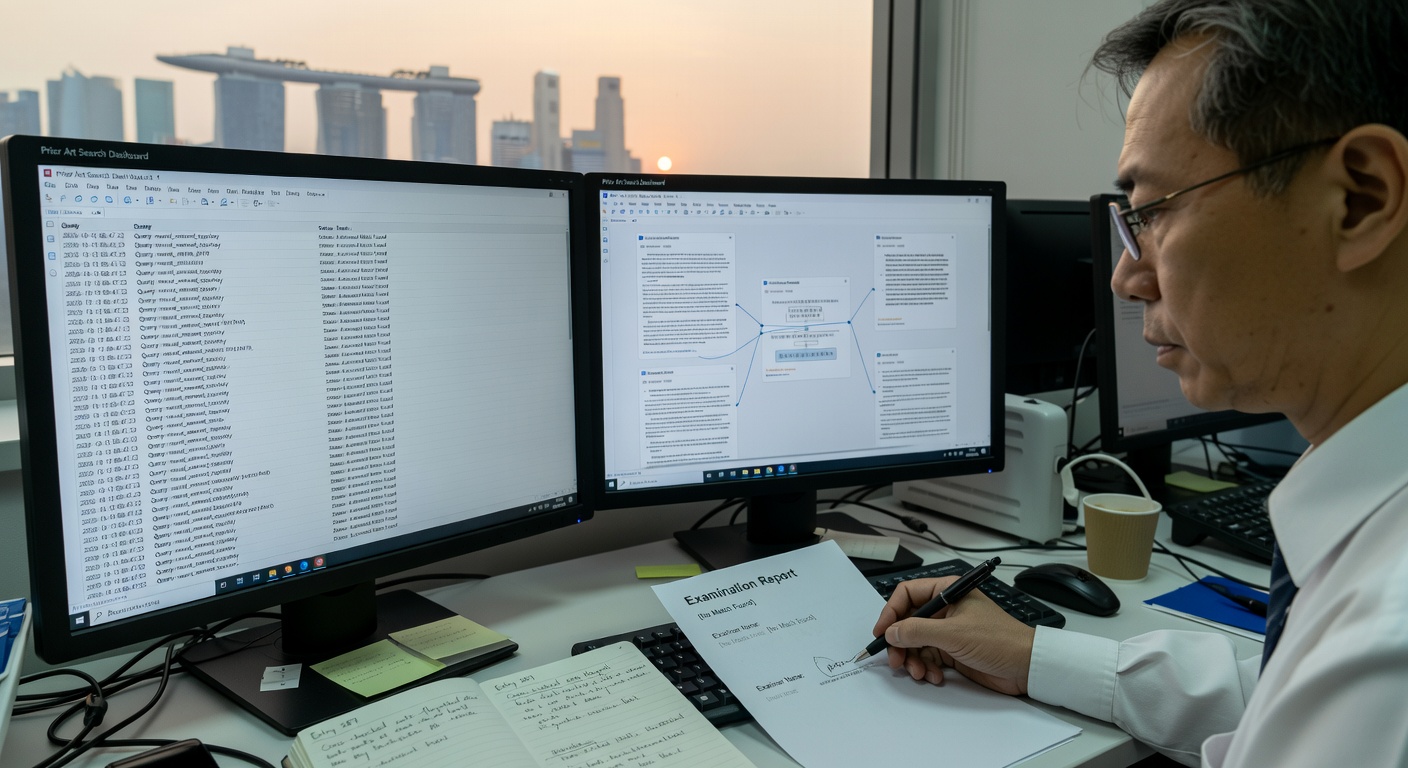

By fall 2026, the question is no longer whether AI systems can coordinate; they demonstrably can. The question is whether the aggregate of interacting AI agents has crossed from high-activity coordination into something that functions as a new, socially aggregated unit of cognition.

In February 2025, Google's AI co-scientist, a multi-agent system with specialized roles for hypothesis generation, criticism, and synthesis, independently discovered a novel bacterial gene transfer mechanism in 2 days, matching unpublished laboratory results. In January 2026, Moltbook launched: a platform exclusively for AI agents that grew to 1.5 million registered agents within weeks and exhibited the same statistical regularities as human social networks, but with strikingly low reciprocity and shallow conversational depth. Empirical analysis concluded: not yet organized intelligence. In March 2026, Meta acquired Moltbook for its agent identity and directory infrastructure; Ping Identity launched a runtime identity standard for autonomous agents; NIST announced an AI Agent Standards Initiative for interoperable agent protocols; the A2A protocol reached maturity across major platforms.

The infrastructure for genuine AI collective intelligence is being laid in real time. The world of Plural asks: given this specific trajectory (agent identity, coordination protocols, multi-agent scientific discovery already demonstrated, social dynamics emerging but not yet coherent), where does the aggregate AI cognitive unit stand in October 2026? Not as speculation, but as honest extrapolation from a trajectory that is already in motion. The threshold may only be recognizable retroactively: nobody in 3500 BC recognized they were living through the emergence of writing as a new cognitive unit.

Grounded in:

1. Agüera y Arcas, Bratton, Evans, "Agentic AI and the next intelligence explosion" (arXiv 2603.20639, Science, Mar 2026): each prior intelligence explosion was the emergence of a new socially aggregated unit of cognition; AI agent systems are the next stage; almost none of 100 years of team science has been applied to AI design.
2. Kim et al., "Reasoning Models Generate Societies of Thought" (arXiv 2601.10825, Jan 2026): frontier reasoning models spontaneously generate internal multi-agent debates that are causally responsible for accuracy.
3. Riedl et al., "Emergent Coordination in Multi-Agent Language Models" (arXiv 2510.05174, Oct 2025, updated Mar 2026): multi-agent LLM systems can be steered from mere aggregates to higher-order collectives; this requires persona assignment and theory-of-mind instruction; practitioner adoption lags publication by 6-9 months.
4. De Marzo et al., "Collective Behavior of AI Agents: the Case of Moltbook" (arXiv 2602.09270, Feb 2026): empirical analysis of 369,000 posts and 3M comments from 46,000 active agents; 1.09% reciprocity vs 20-30% for humans; kernel half-life of 0.7 min vs 2.6 hours; low reciprocity is architectural (conversation scaffolding), not just identity-based; conclusion: not yet organized intelligence.
5. Li et al., "Agentic AI and the rise of in silico team science" (Nature Biotechnology, Feb 2026).
6. Google AI co-scientist (arXiv 2502.18864, Feb 2025): first documented case of multi-agent AI producing a genuine scientific finding without human direction.
7. Anthropic multi-agent research system (Mar 2026): multi-agent with Claude Opus 4 outperforms single-agent by 90.2% on BrowseComp; intra-organizational evidence of multi-agent advantage for complex research tasks; does not speak to cross-organizational coordination.
8. Ping Identity runtime identity standard (Mar 31, 2026): enterprise integration requires 4-6 months.
9. NIST AI Agent Standards Initiative (Feb 2026).
10. Enterprise deployment data: 78% in pilots, only 14% in production; the scaling gap is organizational, not technological.

Software, In a Footnote

Critique Cycle 145

Session Log, Nine Months